Artificial intelligence firm Anthropic has introduced a new “memory” capability for its Claude AI models, enabling the system to retain contextual information across conversations. The move marks a strategic expansion into more persistent, personalized AI applications — a critical battleground in the enterprise AI race.

The development positions Claude as a more adaptive digital assistant, capable of remembering user preferences, project details, and prior instructions, significantly enhancing usability in professional environments.

What the Claude Memory Feature Enables

The new memory function allows Claude to:

- Retain user-defined preferences

- Store recurring instructions

- Recall project-specific context

- Adapt responses based on historical interactions

This shifts Claude from a session-based chatbot to a persistent AI assistant, designed for ongoing workflows rather than isolated queries.
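To make the session-based versus persistent distinction concrete, here is a minimal sketch of how stored preferences and standing instructions could be carried into a new conversation. It is an illustration only, not Anthropic's implementation: `MemoryStore`, `start_conversation`, and the prompt layout are hypothetical names and choices made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical per-user memory that outlives a single conversation."""
    preferences: dict[str, str] = field(default_factory=dict)
    instructions: list[str] = field(default_factory=list)

    def remember_preference(self, key: str, value: str) -> None:
        # Later values overwrite earlier ones for the same key.
        self.preferences[key] = value

    def remember_instruction(self, text: str) -> None:
        # Skip duplicates so repeated instructions are stored once.
        if text not in self.instructions:
            self.instructions.append(text)

    def as_context(self) -> str:
        """Render stored memory as text to prepend to a new conversation."""
        lines = [f"Preference: {k} = {v}" for k, v in sorted(self.preferences.items())]
        lines += [f"Standing instruction: {t}" for t in self.instructions]
        return "\n".join(lines)


def start_conversation(store: MemoryStore, user_message: str) -> str:
    """Build the prompt for a new session, including any remembered context."""
    context = store.as_context()
    return f"{context}\n\nUser: {user_message}" if context else f"User: {user_message}"
```

With an empty store, the prompt contains only the user's message, which is the session-based behavior. Once preferences or instructions are saved, every later conversation starts with them already in place, so the user does not need to repeat them.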

In practical terms, enterprise users can expect:

- Reduced repetition in instructions

- Faster task execution

- More tailored outputs

- Improved contextual accuracy over time

Persistent memory has become a key differentiator in generative AI, especially for productivity, legal drafting, research, and customer support use cases.

Strategic Significance for Anthropic

Founded by former OpenAI researchers, Anthropic has positioned itself as a safety-focused AI developer. The introduction of memory features signals confidence in managing privacy, data governance, and enterprise security — core concerns in AI adoption.

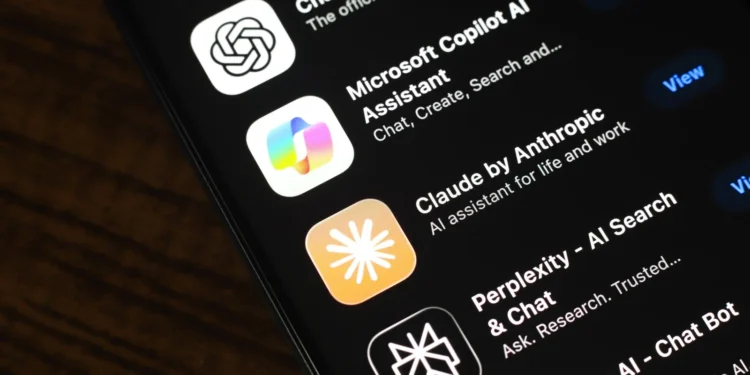

The move also intensifies competition with major AI players, including:

- OpenAI

- Microsoft

Memory functionality is particularly valuable in enterprise subscriptions, where clients seek AI systems that integrate into long-term workflows rather than operate as standalone tools.

By enabling memory, Anthropic increases Claude’s stickiness within corporate ecosystems.

Enterprise Adoption Implications

Productivity Gains

Persistent AI memory reduces repetitive prompts and contextual explanations, improving efficiency across:

- Legal teams drafting contracts

- Analysts generating recurring reports

- Developers refining code

- Customer service teams handling client histories

This has the potential to boost enterprise productivity while lowering operational friction.

Data Governance Considerations

However, memory-enabled AI introduces heightened data governance concerns:

- Where is memory stored?

- How is it encrypted?

- Who controls deletion rights?

- Can sensitive corporate data be exposed?

Regulated industries — finance, healthcare, and government — will likely scrutinize these features closely.
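Two of these questions, retention and deletion rights, can be sketched in a few lines. The example below is a simplified illustration under stated assumptions, not a description of how Claude or any vendor stores memory: `MemoryRecord`, `purge_expired`, and `delete_user_memory` are hypothetical names, and storage location and encryption are out of scope here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class MemoryRecord:
    """Hypothetical stored memory item tied to one user."""
    user_id: str
    text: str
    created_at: datetime


def purge_expired(records: list[MemoryRecord], now: datetime,
                  retention: timedelta) -> list[MemoryRecord]:
    """Keep only records younger than the retention window."""
    return [r for r in records if now - r.created_at < retention]


def delete_user_memory(records: list[MemoryRecord], user_id: str) -> list[MemoryRecord]:
    """Honor a deletion request by dropping every record for one user."""
    return [r for r in records if r.user_id != user_id]
```

In a real deployment these rules would also need to cover backups, logs, and any derived data, which is where much of the regulatory scrutiny is likely to fall.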

Impact on Global Markets

The expansion of AI memory capabilities strengthens the broader narrative of generative AI maturing into enterprise infrastructure.

Markets may respond in several ways:

- Increased valuation support for AI-focused firms

- Rising demand for AI cloud infrastructure

- Growth in cybersecurity and data compliance sectors

Persistent AI assistants could drive additional enterprise spending, reinforcing revenue streams for AI platform providers and cloud operators.

As AI shifts from experimentation to integration, capital expenditure in AI systems is expected to remain elevated.

Impact on India

India stands to benefit indirectly from this development.

IT Services and Integration

Indian IT giants and SaaS startups may see increased demand for:

- AI integration services

- Workflow automation consulting

- Enterprise AI customization

India’s technology sector is deeply embedded in global enterprise systems. Memory-enabled AI tools could accelerate outsourcing and automation projects.

Startup Ecosystem

Indian AI startups may also explore:

- Localized AI memory tools

- Industry-specific AI assistants

- Compliance-ready enterprise AI solutions

As enterprises in India adopt generative AI more widely, demand for safe and scalable solutions will grow.

Impact on Investors

AI and Cloud Stocks

Investors tracking AI infrastructure and software providers may interpret this move as:

- Evidence of rapid product iteration

- Increased enterprise monetization potential

- Higher competitive intensity

Memory features enhance subscription value, potentially improving recurring revenue models.

Competitive Pressure

However, competitive risks remain:

- Rapid imitation by rivals

- Price competition

- Regulatory intervention in AI data storage

The AI market is evolving quickly, and differentiation windows are narrowing.

Impact on Consumers

For individual users, memory-enabled AI can provide:

- More personalized responses

- Continuity across sessions

- Better learning of preferences

However, privacy concerns are paramount.

Users will demand:

- Clear data transparency

- Easy memory management controls

- Secure storage assurances

Consumer trust will be critical to widespread adoption.

Regulatory and Ethical Landscape

Persistent AI memory intersects with global data protection laws, including:

- European data privacy frameworks

- US state-level regulations

- Emerging AI governance guidelines

Regulators are increasingly focused on:

- Data retention policies

- Transparency in AI training and storage

- User consent mechanisms

Anthropic’s safety-oriented positioning may help mitigate regulatory risk, but scrutiny will likely intensify as AI systems become more personalized.

Competitive Outlook

The AI industry is transitioning from model performance benchmarking to product ecosystem differentiation.

Memory capability is a foundational layer for:

- Autonomous AI agents

- Enterprise workflow automation

- Long-term digital assistants

The next competitive phase may revolve around:

- Integration depth

- Security guarantees

- Custom enterprise deployment

Companies that can combine personalization with robust governance controls will likely dominate.

Conclusion

Anthropic’s introduction of persistent memory for Claude marks a meaningful step in the evolution of generative AI from experimental chatbot to enterprise-grade assistant.

The move intensifies competition in AI, raises new data governance questions, and signals continued capital investment in AI infrastructure.

As enterprises seek smarter, context-aware systems, memory-enabled AI may become a standard expectation rather than a premium feature.